
Breaking out of Reverse-Engineered Planning for Future Complex Security Challenges

by: Ben Zweibelson

Original blog post can be found here: https://benzweibelson.medium.com/breaking-out-of-reverse-engineered-planning-for-future-complex-security-challenges-a5c0b7772f92

This post revisits a strategic design article published back in 2015 in the British military journal Defence Studies (Routledge, 15:4, 2015). In the original article, I present how militaries and security-oriented organizations approach complexity through a systematic, linear-causal logic where we end up reverse-engineering all of our activities as if we might control and predict what cannot ever be. Below, I paste select excerpts from that article that readers might pair with the many discussions and reflections on the last 8 months of chaos, collapse, and confusion in Afghanistan as well as other unexpected (and poorly predicted) events such as the Russian invasion of Ukraine, ‘Great Power Competition’ and more. Our defense institutions appear frustrated and trapped clinging to outdated or irrelevant conceptual frames for making sense of complex security contexts… and are still often unwilling (or unwittingly preconditioned) to consider ideas from beyond the pale. Are we still stuck in this grand illusion that with just enough data, technological advantage and faster processing speeds, we can ‘win’ or defer/defend through oversimplified formulas and checklists?

Note: because this post draws from multiple sources formatted in different notation settings, and because of how Medium organizes pasted citations, the footnote numbering repeats (1, 2, 3… 1, 2, 3) and some citations appear in incomplete APA form. The original source documents have the correct citations for those interested.

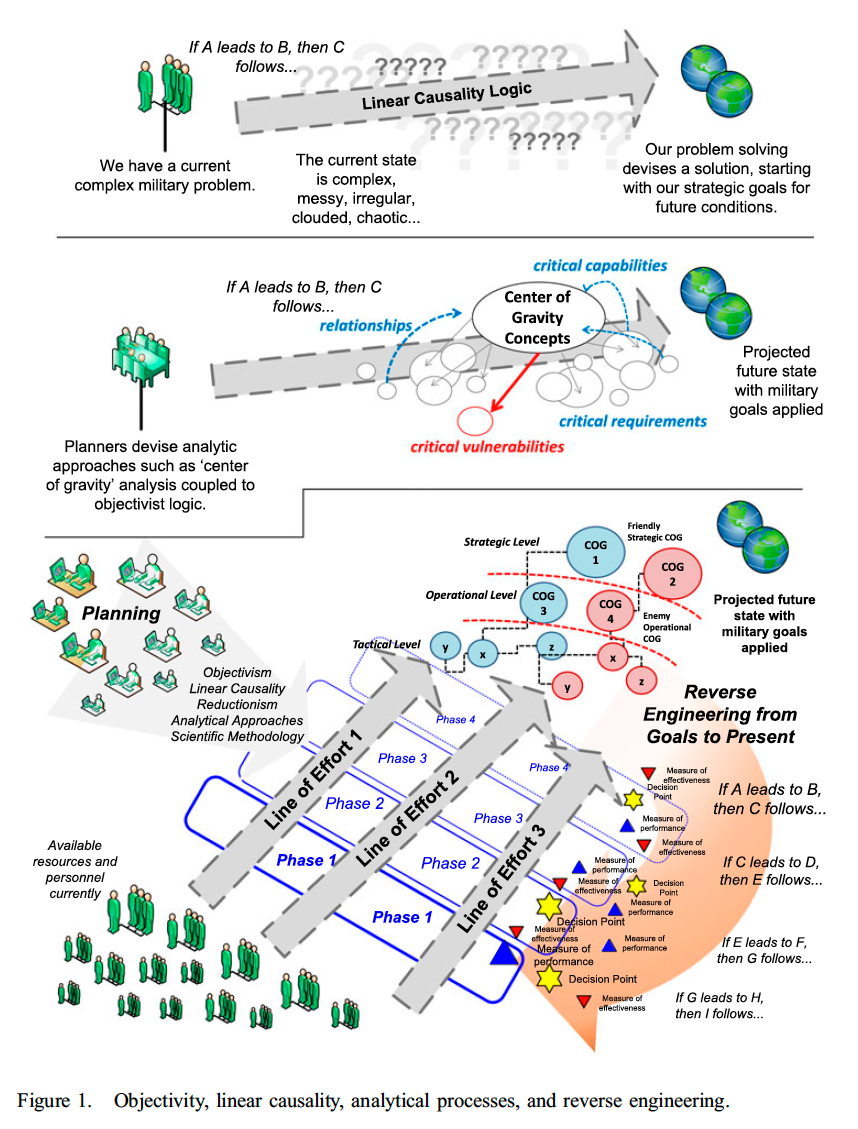

In the above graphic from the article “One Piece at a Time: Why Linear Planning and Institutionalisms Promote Military Campaign Failures”, I attempt to depict to readers how military organizations make decisions in their planning methodologies (NATO-OPP, JPP, MDMP, MCPP, and so on). We attempt to clarify our desired ‘end state’ or strategic goals in the future, and subsequently pair them with the perceived ‘problem’ or problems preventing us from immediately reaching said goal… and those problems are associated with institutionally sanctioned and validated ‘solutions’ maintained within our curated knowledge for warfare/defense/security affairs.

Once we complete the ‘end-state’, ‘problem-identification’, ‘solution known to solve said problem’, we use this systematic logic to complete the circuit and plan in reverse-order our activities along sweeping ‘lines of effort’ or even ‘physical lines of operation’ if we are really fixated on geo-physical constructs. ‘Systematic’ means that there is some direct, causal and ‘input-output’ correlated relationship that is quantifiable and suitable for analytic optimization (A plus B leads to C). In the passage below from my upcoming strategic design monograph (pending publication in late 2022), I explain this further:

Convergent, systematic processes feature a preference toward incremental gain in efficiencies so that clearly defined goals and objectives as originally crafted can be reached faster and with lower strain on resources and organizational interest. Systematic logic functions with inputs linked to clear outputs, and where linear-causal relationships work mathematically, even mechanically to sequence discrete and reducible activities across time and space to lead toward overarching objectives and goals.[1] Modern planning in this mindset seeks maximum efficiency at the expense of imposing closed system logic, where “time must be seen as merely metric, not productive of novelty.”[2] Reality must be able to be frozen, isolated into slivers so that analytical optimization can work, and in that reductionism the methodology can move forward or backward on the timeline using enduring formulas and principles governing how things happen.[3] “Thus, a closed system is one which is conceptualized so that it has no interaction with any element not contained within it.” Isolating complex warfare through categorization, rule-setting, and analytical optimization closes that element off from the larger system.

Thus, systematic logic is the organizing structure for nearly all military decision-making at a deep (philosophically, this is where ontology and epistemology are found) and usually an institutionally blinded level. By blinded, I mean that in social paradigm theory, organizational theorists acknowledge that usually, the people using a social paradigm (we all are using one; we cannot ‘step outside of our paradigm’ unless we fall into the warm embrace of another social paradigm) are unwittingly or even unwillingly trapped in using them. The unwitting quality is when we think and act non-reflectively; in that we do things over and over and challenge the methodology or the practitioner to modify things to get the results we expect, but we cannot imagine challenging beyond our methods and into the social paradigm frame operating around us like an invisible soup.

The unwilling aspect is that most people using a social paradigm have what organizational theorists term ‘paradigm incommensurability’ in that we cannot ever acknowledge or tolerate another paradigm (a very different way of viewing complex reality, entirely dissimilar from our own) and thus, two people using different social paradigms will talk past one another, never to agree or even respect the intellectual or conceptual frameworks outside our own socially constructed boundaries. What does this mean in practice? Military professionals unwittingly use their decision-making methods over and over because they are trained to do this, but we cannot challenge things such as ‘ends-ways-means’, ‘centers of gravity’, or even ‘levels of war’ because we are programmed never to challenge such things or risk being accused of being a heretic (or worse). If strategic designers offer up alternative concepts (such as postmodern ideas, or concepts from complexity theory, systems theory, or elsewhere outside the mainstream military technical-rationalist framework of reductionism and analysis), those who are unwilling will dismiss such ideas as pointless, irrelevant, or too radical to contemplate seriously. In the passage from the ‘one piece at a time’ article below, I expand on these themes:

With the reverse engineering perspective on planning, time and space become a linear and malleable construct we can control and reduce, where we look for causality with “if A leads to B, then C” logic for planning. Almost like a movie on tape, we can fast-forward or reverse, adding and subtracting elements as necessary. Taken further, we actually reverse engineer along this linear timeline by setting in the uncertain future our goals and objectives, with lines of effort leading back to our present state. Upon this cognitive structure, we reverse engineer all of the phases, decision points, decisive points, branch plans, and metrics (measures of performance/effectiveness) starting at our goals and leading back to the present. Campaign plans are written in this manner, and with every major review, our planning output retains this same implicit structure while accepting the same justifications on “how the world and warfare works.” This is how the modern western military makes sense of the past, present, and future of warfare (Bruner 1986, p. 96, Marion and Uhl-Bien 2003, pp. 56–57, Kelly and Brennan 2010, p. 110, Paparone 2012, p. 36, Schmidt 2014, p. 51). Once our campaign plan is in place, we cycle units and resources while trudging along, expecting the overarching strategy to eventually bear fruit. Applied to simple and even complicated problems, it tends to work remarkably well. The only boot prints on the moon came from NASA; the atomic bombs that changed the world in 1945 came from a similar mass-organized industrial endeavor, and the East India Company dominated global trade spanning three centuries. There are countless other examples of centralized hierarchies dominating all competitors. Yet complex environments, highly resistant to such processes, reject centralized efforts to control and predict outright (von Bertalanffy 1968, Weinberg 1975, Holland 1992, p. 17, Gharajedaghi 2006, Bousquet 2008, Bousquet 2009). (pp. 363–364 in original article by the author)

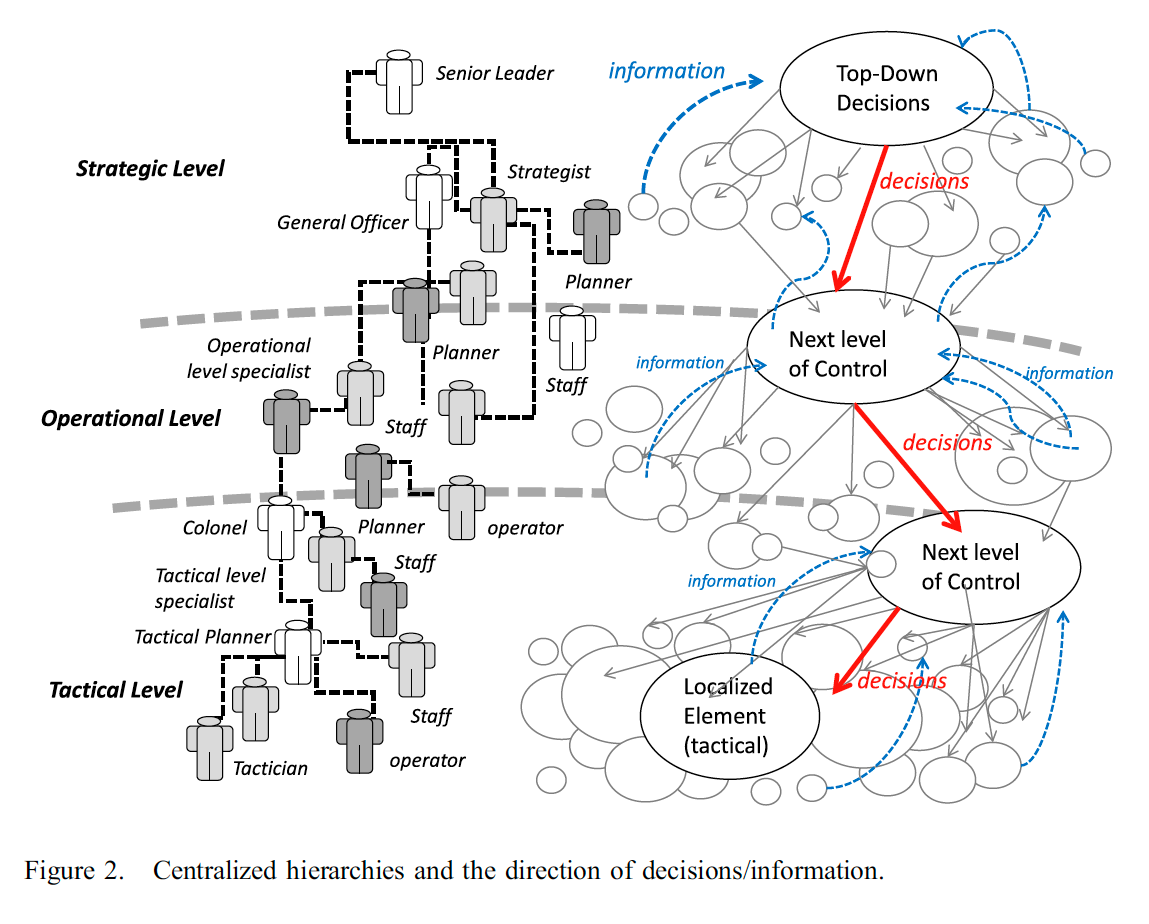

In another graphic from this article, I present what is likely not just the most familiar way modern military forces and security organizations conceptualize their organizations and how warfare works, but often (sadly) the only way they would ever entertain reality to be for such things… in that proponents of this worldview often dismiss anything that does not support their belief system as irrelevant, anecdotal, or ‘unscientific’. This again is that institutionalized social paradigm incommensurability with anything beyond the socially constructed limits. As one example to illustrate this, across the modern military community of practice (and beyond), we agree to conceptualize three levels of war arranged in a centralized hierarchy, which our military organization mirrors in command authority, position, status, and flow of information (upward) and decisions (downward).

This is nested within a systematic logic of inputs leading to outputs, where ends must be preconfigured to link with well-established ways and means, so that linear-causal lines of effort can be arranged in a clear, predictable and stable formulaic arrangement of goals and effects. Science is kidnapped by the military so that a pseudo-science is produced, where all of the conceptual models (levels of war, centers of gravity, ends-ways-means simplistic relationships, lines of effort, measures of performance/effectiveness and so on) are believed and defended in what is more of an ideological than a self-critical scientific ethos. Science first admits “I do not know X…therefore I desire to experiment to learn and share with my community so they collectively might test, challenge or use what I contribute”, while military pseudo-science does very little of this. The centralized hierarchy exists for control, predictability, rigid obedience, uniformity, indoctrination, the illusion of order and stability (in the chaos of war), and a mimicry of scientific process in exchange to gain what science provides societies. This is not an equal exchange, in that pseudo-science brings much institutional baggage.

From that aforementioned design monograph pending publication, I extend several of these critiques on modern military decision-making and its dominant war frame (perhaps a social paradigm coupled with deep institutional reflection):

Ideally, one can gain in efficiency (reducing risk, increasing control and prediction) through reducing complexity down sufficiently so that formulas, rules, and established categorization stabilize what was previously a chaotic and confusing whole system. However, the act of becoming more efficient does not correlate directly into becoming more effective in complex systems; effectiveness frequently requires innovation, imagination, and a willingness to break away from the very practices that an organization might be attempting to improve efficiency with. In the first portion of NATO-OPP and JPP, the decision-making methodology seeks to pair the ‘strategic, national or higher headquarters’ command intent with evaluation activities including analysis of the operating environment, assessment of the initiating directive (political or strategic), as well as determinations in time available to plan. Modern military decision-making features a series of staffing sections aligned with compartmentalized (Prussian styled, specialized/categorized staff functions) actions to determine if the requirement meets certain suitability, existing, capacity, capability, and authority criteria. NATO doctrine states: “the strategic and operational-level commander typically will provide initial planning guidance based upon current understanding of the operating environment and other intelligence products and staff estimates; and other factors relevant to the specific planning situation…other relevant factors include relevant doctrine, lessons identified and ongoing research and concept development.”[1] Joint doctrine parallels this and determines several outputs generated in this initial assessment of strategic guidance for planning. They are: “assumptions, identification of available/acceptable resources, conclusions about the strategic and operational environment (nature of the problem), strategic and military objectives, and the supported commander’s mission.”[2]

There is a clear effort at an epistemological level (how an organization knows how knowledge exists, how methods function and are validated) to take a natural science inspired, laboratory styled approach to quantifiable, objective analysis of security challenges. The bounding of an ‘operating environment’ as something outside or beyond that of the organization (us, our beliefs, values, rituals, subjective and socially constructed frames) demonstrates a military procedural approach to encase the security environment under examination so that like a scientist, the area of inquiry is detached from the scientist (and external variables) until one decides to act upon it in some deliberate (and quantifiable) manner. Intelligence focuses outward, using analytical tools that channel, categorize, isolate, objectify, filter, or sort available information in particular ways that suit military conceptual models within NATO-OPP and JPP processes. Institutionalized military doctrine and historical lessons are prioritized and granted greater validity than untested, unproven, or experimental knowledge and techniques…illustrating the military expectation that complex adaptive systems must become ordered, controlled, and static when military decision-making methodologies are applied to them. Things that worked before are therefore expected to work again in a similar and predictable manner, violating the core concepts of complexity, emergence, nonlinearity, and adaptation. We cannot ever have complete knowledge of complex systems, nor can we remove ourselves from complexity to isolate or enforce some sort of assumed objectivity either.[3]

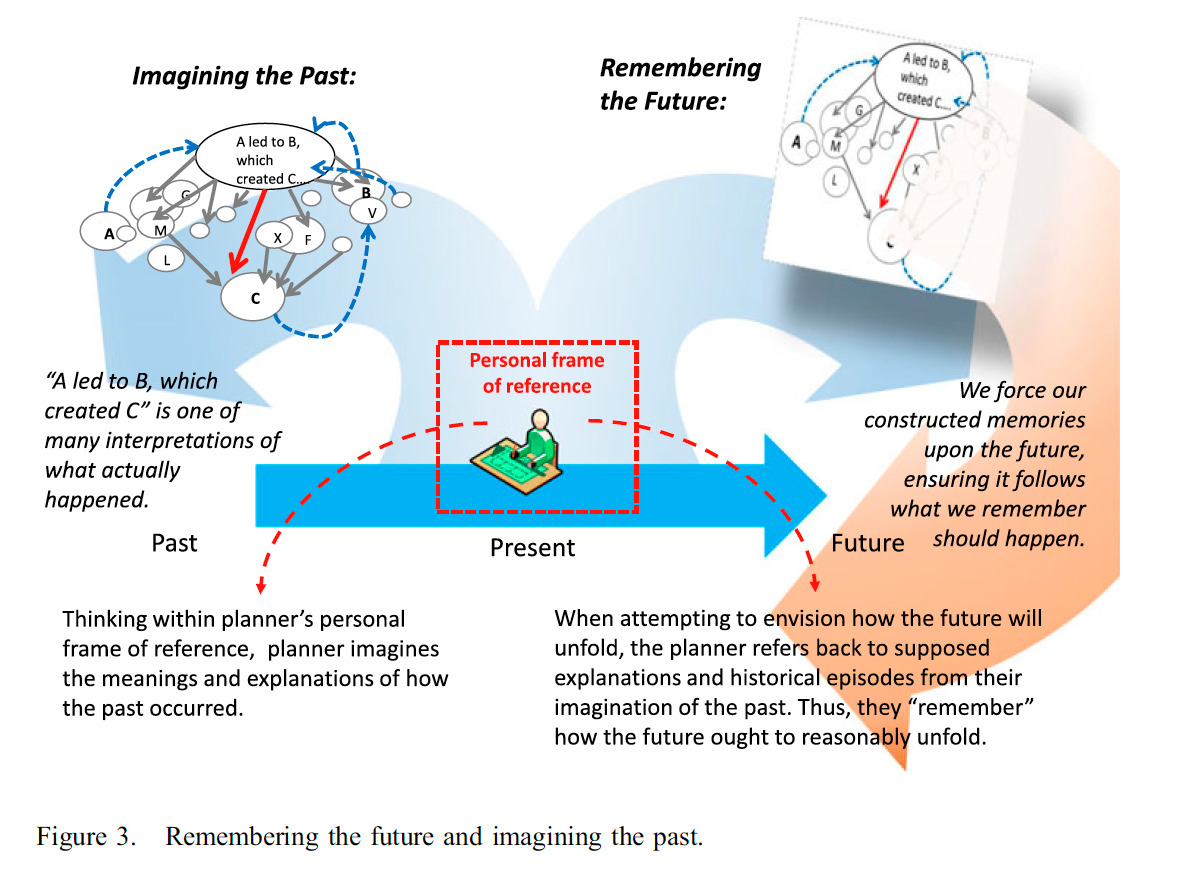

This leads into a deeper point and a military philosophy topic of how and why we view warfare within complex reality. The above graphic is also from the article published in Defence Studies and illustrates the following:

Complex situations reject linear thinking, yet our traditional campaign planning methodology continues to plot along (Conklin 2008, p. 4, Ibrahim 2009, Ryan et al. 2010, p. 249). We publish our extensive campaign plans and subsequently maintain them through a massive volume of data and metrics that somehow reinforce original concepts (Bousquet 2009, pp. 128–129, Jackson 2013, pp. 52–53). “A does lead to B, which forms C” as we force observations and events to continue to support initial campaign intent and objectives. Sociologist Karl Weick observes, “Bureaucracies see what they have seen before and they link these memories in a sequential train of associations…[They] tend to imagine the past and remember the future” (Tsoukas and Vladimirou 2001, p. 975, Weick 2006, p. 448). Weick illuminates a dangerous output of linear causality, in that our military tends to predict future events based upon flawed reasoning where we misunderstand past events… Linear causality coupled with bureaucratically rigid cognitive processes produces a fantasy of past time with a “memory” imposed upon how the future ought to unfold. Thus, the campaign plan, as a reverse-engineered roadmap for the future, demonstrates how we remember things need to flow so that we reach our goals (Figure 3). (original article, p. 366)

Thus, we tend to be blind (as an institution) to our own conceptual frames while at the same time, we ritualize natural science techniques, methods and models into military pseudo-science, complete with our appropriation and reinterpretation of scientific terms and metaphors into military jargon that is alien to its source context. We do this with ‘complexity’ (often interchanged with ‘complicated’), ‘non-linear’, ‘asymmetric’, ‘chaotic’, ‘emergent’, ‘systemic’, ‘synthesis’, ‘dynamic’, ‘learning system’, ‘irregular’, ‘change agent’, and many other concepts ripped from one scientific discipline so that assimilation can occur and the Newtonian-physics oriented war frame can adopt fresh buzzwords.

To close with another passage from the aforementioned monograph pending publication, we can take the popular pseudo-scientific military term ‘emergence’ as it is currently showcased in policy, strategy, operational designs, campaigns, as well as leadership vision statements across the entire defense institution. ‘Emergence’ is forced into a three-century old military frame that is devoid of complexity theory, where Newtonian physics demands the concept adapt to linear-causal, mechanistic and systematic logic instead of following what ‘emergence’ in complexity theory actually means. To truly incorporate ‘emergence’ correctly would require a massive revision of nearly all existing military doctrine, which of course the institution would fight through witting and unwitting advocates of organizational self-preservation:

Emergence is important for design consideration in that the legacy or original context that generated the change does not itself possess the ability to explain it. This means that outside of simplistic and perhaps some complicated systems contexts, the systematic logic of pre-established ‘input-output’ thinking that provides ‘problem-solution’ formation within ‘ends-ways-means’ constructs is flawed. The establishment of a ‘solution’ coupled to a future single ‘end state’ within a complex system implies linear causality where ‘A plus B will lead to C’ systematically so that a military might reverse-engineer all possible problems with known solutions already in their curated knowledge. Emergence denies this (except in closed systems of stable, simplistic settings), where complex systems generate novel developments that cannot be pre-configured, paired with historic solutions, or anticipated and mapped back to direct, linear causations. The modern military emphasis on capturing ‘lessons learned’ and ‘best practices’ highlights another example of this tension between complexity and complicated or simple system behavior.

For example, analytic reductionism can take a glass of water and isolate every water molecule down to individual ones, yet at some unmeasurable point in assembling molecules together, ‘wetness’ emerges from what had previously not been capable of being understood as ‘wet.’ Emergent properties deny the analytic, rationalized tools of prediction, control, as well as description in most cases. NATO and Joint Forces thus must not just think toward the strategic focus or objective for various security activities in terms of political or institutional expectations at present, but inward at the institution and individual strategist’s own logic, belief system, biases, and at how, being part of a dynamic and complex system, one cannot project one design methodology or model upon all possible emergent contexts. Complex systems are nonlinear; “there is no proportionality between cause and effects. Small causes may give rise to large effects. Nonlinearity is the rule, linearity is the exception.”[1] Yet, modern military forces use methodologies such as NATO-OPP/JPP where all analytical methods express a complex security context in clear, simplistic and linear ‘cause-effect’ relationships. Emergence is discounted in NATO-OPP/JPP, as are nonlinear and emergent phenomena and relationships, which again are foundational to how complex systems differ from complicated and simple systems.

The process of emergence deals with this fundamental question in complex systems theory: “how does an entity come into existence whereas previously it did not exist, and the system had no understanding or idea of it?”[2] When emergence occurs, one can observe something such as the appearance of new or unrecognized order, organizational form, or novel structure/action that does not have a clear causal relationship with the earlier system (when the emergence did not yet exist!). Something comes into reality, and observers struggle to explain how this happened while unable to clearly associate inputs and outputs or frame a linear path for the emergent event.

Complex systems are fractal (where irregular forms have strange patterns that appear scale dependent), and no single measurement or equation can ever work beyond specific and temporary contexts; “there is no single measurement that will give a true answer.” It will depend on the measuring device, and how and where one applies it. Complex systems demonstrate what is termed ‘recursive symmetries’ that occur between scale levels; this means “they tend to repeat a basic structure at several [different] levels.”[3] Consider how the spiral swirl of cream in a coffee operates and presents like how hurricanes form, a flock of birds spiral in formation, and how a galaxy rotates despite all of these phenomena being unrelated. Neither NATO-OPP nor JPP has any models or theoretical underpinnings to incorporate this, yet modern military forces evaluate and decide on security activities applied to dynamic, complex systems in most every execution.

Emergence is about change, and complex systems reject most all of the systematic logic that modern military decision-making employs. We might close off this post with some choice lyrics from the metalcore band ‘As I Lay Dying’, from their song “The Only Constant is Change”:

There is nothing that stays the same

From the foundation of our lives

There is nothing that stays the same

There is nothing to erase time

The only constant is change

Nothing remains the same

The only constant is change

There’s only growth or decay

[1] Paparone, “How We Fight: A Critical Exploration of US Military Doctrine”; Paparone, “Designing Meaning in the Reflective Practice of National Security: Frame Awareness and Frame Innovation.”

[2] Haridimos Tsoukas, “What Is Organizational Foresight and How Can It Be Developed?,” in Organization as Chaosmos: Materiality, Agency, and Discourse, 1st Edition (New York: Routledge, 2013), 263; Antoine Bousquet, “Cyberneticizing the American War Machine: Science and Computers in the Cold War,” Cold War History 8, no. 1 (February 2008): 83.

[3] Klaus Krippendorff, “Principles of Design and a Trajectory of Artificiality,” Product Development & Management Association 28 (2011): 416; Eric Dent, “Complexity Science: A Worldview Shift,” Emergence 1, no. 4 (1999): 5–19.

[1] Ministry of Defence, “AJP-5: Allied Joint Doctrine for the Planning of Operations (Edition A Version 2),” 4–5.

[2] Joint Publication 5–0: Joint Planning, III–6.

[3] Rika Preiser, Paul Cilliers, and Oliver Human, “Deconstruction and Complexity: A Critical Economy,” South African Journal of Philosophy 32, no. 3 (2013): 262–63.

[1] Tsoukas and Hatch, “Complex Thinking, Complex Practice: The Case for a Narrative Approach to Organizational Complexity,” 988.

[2] Jochen Fromm, “Types and Forms of Emergence,” ArXiv: Adaptation and Self-Organizing Systems, June 13, 2005, 1–23; Hempel and Oppenheim, “On the Idea of Emergence”; John Holland, Emergence: From Chaos to Order (Reading, Massachusetts: Perseus Books, 1998); Paul Lewis, “Peter Berger and His Critics: The Significance of Emergence,” Springer Science+Business Media, Symposium: Peter Berger’s Achievement in Social Science, 47 (April 1, 2010): 207–13.

[3] Tsoukas and Hatch, “Complex Thinking, Complex Practice: The Case for a Narrative Approach to Organizational Complexity,” 988.