
Thoughts on Human-Machine Teaming, AI and Changing Warfare… (Part I)

By Ben Zweibelson. Original post can be found here: https://benzweibelson.medium.com/thoughts-on-human-machine-teaming-ai-and-changing-warfare-part-i-32445a5c938a

To a computer, a millisecond is an eternity, but to us it’s nothing… Human speech to a computer will sound like very slow tonal wheezing, kind of like whale sounds. Because what’s our bandwidth? A few hundred bits per second, basically… maybe a few kilobits per second if you’re going to be generous?

– Elon Musk[1]

The most important competition is not the technological competition, although one would clearly want to have superior technology if one can have it. The most important goal is to be the first, to be the best in the intellectual task of finding the most appropriate innovations in concepts of operation and making organizational changes to fully exploit the technologies already available and those that will be available in the course of the next decade or so… Indeed, being ahead in concepts of operation and in organizational arrangements may be far more enduring than any advantages in technology or weapon systems embodying them, and designing the right weapon systems may depend on having good ideas about concepts of operations.

– Andrew Marshall[2]

Warfare has always been changing as humans develop new ideas and technology, and otherwise expand their range of abilities to manipulate reality to their advantage and creativity. Just as Homo sapiens proves astoundingly adaptive and clever in how to produce art, beauty and generously extend quality of life for the species, they continue to also be devastatingly capable of conjuring up ever more horrific and powerful ways to engage in organized violence against those they are in competition or conflict with. Yet the twenty-first century is wholly unique in that humanity now has the technological keys to unlock something previously unreachable. Civilization, human existence, and perhaps war itself may move into what previously could only be captured in fantasy, science fiction, or ideological promises and magic.

We are poised, if we can just survive the next few decades in which all sorts of modern existential threats remain horrifically available, to enter a new chapter in humanity and organized violence. Indeed, Homo sapiens will shift from constructing ever more sophisticated and lethal means to impose behavior changes and force of will (security, foreign policy and warfare) to a condition where the means become entirely dissimilar, emergent ends in themselves. Our tools of war will be able to think for themselves, and think about us, as well as think about war in starkly dissimilar, likely unhuman terms. Unlike when we split the atom and quickly weaponized that technological marvel, there will not be the same control and command of weapons that can decide against what we wish, or even what we might be able to grasp in complex reality.

Humans will transition from ever-capable masters of increasingly sophisticated war tools toward less clever, less capable and insufficient handlers of an increasingly superior weaponized capability that in time will elevate, transform or potentially enslave (or eliminate) Homo sapiens into something different… possibly unrecognizable. War, as a purely human construct that has been part of humanity since inception, will change as well. These changes will not occur overnight, nor likely in the next decade or two, which sadly renders such discussions out of the essential and toward the fantastical. For the military profession, this reinforces a pattern of opting to transform to win yesterday’s war faster instead of disruptively challenging the force to move away from such comfort and familiarity toward future unknowns that erode or erase favorite past war constructs.

This is a thought piece designed not to debate contemporary matters of foreign policy, defense and warfare as artificial intelligence continues to expand in scale, scope and purpose. Instead, this article addresses the distinct possibility that, given the continued acceleration of technological abilities, speed of learning and apparent patterns of scientific discovery with respect to artificial intelligence, the next century will not be like past periods of disruptive change such as the development of firearms, the introduction of internal combustion engines, or even the arrival of nuclear weapons. While today’s semi-autonomous cruise missile cannot suddenly decide to go study poetry or join an anti-war protest, future AI systems in the decades to come will not be bounded by such limitations. Critics might dismiss such thoughts outright as science fiction claptrap that is inapplicable to contemporary concerns such as the Russian invasion of Ukraine or the saber-rattling of China over Taiwan. Such a reaction misses the point, as the AI-enabled war tools of this decade are like babies or toddlers compared to what will likely develop several decades beyond our narrow, systematic viewpoints. Militaries perpetually chase the next ‘silver bullet’ and secure funding to conduct ‘moon shots’, and these already include advanced AI weaponized systems that may replace most every human operator on today’s battlefield.

Yet when we seek to develop new weapons of war without putting in the necessary long-term, philosophical work on where we might end up, we fall into the trap that Der Derian warns of for societies excited about new technologies but uninterested in engaging in deep philosophical ponderings on the consequences of those new war tools:

When critical thinking lags behind new technologies, as Albert Einstein famously remarked about the atom bomb, the results can be catastrophic. My encounters in the field, interviews with experts, and research in the archives do suggest that the [Military Industrial Media Entertainment Network], the [Revolutions in Military Affairs] and virtuous war are emerging as the preferred means to secure the United States in highly insecure times. Yet critical questions go unasked by the proponents, planners, and practitioners of virtuous war. Is this one more attempt to find a technological fix for what is clearly a political, even ontological problem? Will the tail of military strategy and virtual entertainment wag the dog of democratic choice and civilian policy?[3]

This article presents a framing of how nations currently understand the ever-developing relationship between themselves and artificial intelligence-enabled machines on the battlefields of today, and where and how those relationships likely will shift in the decades to come. Some developments will retain nearly all of the existing and traditionally recognized hallmarks of modern warfare, despite things speeding up or becoming clouded with disturbingly unique technological embellishments to what remains a war of political and societal desires to change the behaviors and belief systems of others. Other paths lead to entirely never-before-seen worlds where humans become increasingly relegated to secondary positions in future battlefields, and perhaps booted off those fields entirely. War itself, as a human creation, may cease to be human… and morph into constructs alien or incomprehensible to the very creators of organized violence for socially constructed wants.

Over Forty Centuries of Precedence: War is a Decidedly Human Affair

Humans have for tens of thousands of years curated and inflicted upon one another a specific sort of organized violence known as war that otherwise does not exist in the natural world. Over 100,000 years ago, early humans harnessed fire and began a gradual journey toward ever-increasingly sophisticated societies. Change occurred gradually, with agriculture and the establishment of cities commencing around 10,000 years ago… this would produce the first recorded wars that differed from other types of violence.[4] The invention of writing (5,000 years ago) would shift oral accounts of these wars into more refined, structured forms that could be studied as well as extended beyond internalization of each living generation.

Yet across these thousands of generations of Homo sapiens that would collectively produce modern societies of today, change occurred quite slowly. Muscle and natural power (wind, fire, water) were the primary energy sources for much of the collective human experience of war, with major technological advancements only occurring in the last several hundred years with the invention of scientific methods and the Industrial Revolution that followed. Fossil fuels replaced muscle power, and the chemical power of gunpowder would replace edged weaponry with bullets, artillery and more. Steam locomotion gave way to faster systems such as internal combustion engines and eventually nuclear power. Technology as well as organizational, cultural and conceptual practices have changed dramatically across this vast span of time, but humans have forever remained the sole decision-makers in every act of warfare until very recently. This is where things will accelerate rapidly, and we may be entering the last century where humans even matter on future battlefields at all.

As soon as early humans realized how to manipulate their environment through inventing tools, they gained an analog function to greatly increase their own lethality to include waging war upon one another. The tools have indeed changed, yet the relationship of the human to the tool has remained firmly in a traditional ‘ends-ways-means’ dynamic. Humans use technology, communication and organization to decide and act to attempt to accomplish goals through various ways and using a wide range of means at their disposal. Until the First Quantum Revolution that would coincide with the Second World War, humans were the sole decision-makers at the helm of quite sophisticated yet entirely analog machines of war.[5] Once computers first became possible (beyond earlier analog curiosities), humans began to gain something new within their decision-making cycle for warfare activities from the tactical up through even grand strategic levels- the artificially intelligent machine partner. At first, such systems were cumbersome, slow and could only perform calculations, but over time they have migrated into central roles for how modern society now depends on this technology for a wide range of effects.[6]

Artificial intelligence has many definitions, and modern militaries often are preoccupied with narrow subsets of what AI is and is not, according to competing belief systems, value sets as well as organizational objectives and institutional factors of self-relevance. Peter Layton provides a broad and useful definition with “AI may do more- or less- than a human… AI may be intelligent in the sense that it provides problem-solving insights, but it is artificial and, consequently, thinks in ways humans do not.”[7] Layton considers AI more by the broad functions such technology can perform than by its relationship to human capabilities. This indeed is often how current defense experts and strategists prefer to frame AI systems in warfare; the human is teamed with a machine that provides augmentation, support and new abilities to perform some goal-oriented task that non-AI enabled warfighters would be insufficient or less lethal performing.

Artificial intelligence is also broadly distinguished into whether it is narrow or general with respect to human intelligence. Narrow AI equals or vastly exceeds what even the best human is capable of doing for specific tasks within a particular domain, and only in clearly defined parameters that are unchanging. Narrow AI can now beat the best human players of chess and other games, with IBM’s Watson defeating the best Jeopardy trivia game players in 2011 as an example. However, narrow AI is fragile, and if the rules of the game were changed or the context transformed, the narrow AI programming cannot go beyond the limits of the written code.[8] General AI, as a concept and benchmark not yet realized in any existing AI system, must equal or exceed the full range of human performance abilities for any task, in any domain, in what must be a fluid and ever-changing context of creativity, improvisation, adaptation and learning.[9] Such an AI is decades away, if ever possible. Just as likely, a devastating future war waged with weaponized AI short of general intelligence could knock society back into a new Stone Age, or perhaps humanity might drift away from AI-oriented technological advances seeking general AI capabilities.[10]

AI is constantly being developed, with many military applications already well established and others on the immediate horizon for battlefields in the next decade. Much of what currently exists was produced in what is termed ‘first-wave AI’: narrow programming created jointly by the computer designers writing the code and the experts in the field or task that the narrow AI system is attempting to excel at. More recently, ‘second-wave AI’ uses machine learning where “instead of programming the computer with each individual step… machine learning uses algorithms to teach itself by making inferences from the data provided.”[11] Machine learning is powerful precisely because human programmers do not have to specify each step… but this creates the paradox that machine learning can quickly exceed the programmer’s ability to track and understand how the AI is learning.[12] This machine learning can be either supervised or unsupervised. Supervised learning systems are given labelled and highly curated data. This is time and resource intensive, but supervised machine learning can achieve extremely high performance in narrow applications.
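As a minimal sketch of the supervised case described above, the toy classifier below learns from a handful of labelled points and then labels a new observation. Everything in it is an illustrative assumption: the data, the label names and the function names are hypothetical, and the nearest-centroid method was chosen only because it fits in a few lines, not because any fielded system uses it.

```python
from collections import defaultdict
from math import dist


def train_centroids(samples):
    """Average the feature vectors for each human-supplied label."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(column) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Assign the label whose centroid lies closest to the new observation."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical curated data: (speed, size) pairs, each labelled by a human expert.
labelled = [
    ((9.0, 1.0), "fast-small"),
    ((8.0, 2.0), "fast-small"),
    ((2.0, 9.0), "slow-large"),
    ((1.0, 8.0), "slow-large"),
]

centroids = train_centroids(labelled)
print(predict(centroids, (8.5, 1.5)))
```

The cost the text mentions is visible even here: every training point needed a person to attach the label before the system could learn anything.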

Unsupervised learning “lets the dog off the leash” and the AI identifies patterns for itself, often moving in emergent pathways well outside the original expectations of the programmer. Layton remarks: “An inherent problem is it is difficult to know what data associations the learning algorithm is actually making.”[13] IBM’s Adam Cutler, in a lecture to military leadership at U.S. Space Command, provided the story of how two chatbots created by programmers at Facebook quickly developed their own language and began communicating and learning in it. The Facebook programmers shut the system down as they had lost control and could not understand what the chatbots were doing. Cutler stated that “these sorts of developments with AI are what really do keep me up at night.”[14] His comment was serious yet simultaneously elicited audience laughter, as the panel question posed was “what sorts of things keep you up at night?”
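To make the unsupervised case concrete, here is a small sketch that groups unlabelled points with a bare-bones k-means loop. The points, the choice of two clusters and the naive starting centers are all assumptions made for illustration; the sketch is not drawn from the systems Layton or Cutler describe.

```python
from math import dist


def k_means(points, k, rounds=10):
    """Group unlabelled points into k clusters; no human supplies categories."""
    centers = list(points[:k])  # assumption: naive start from the first k points
    clusters = [[] for _ in range(k)]
    for _ in range(rounds):
        clusters = [[] for _ in range(k)]
        for point in points:
            nearest = min(range(k), key=lambda i: dist(centers[i], point))
            clusters[nearest].append(point)
        # Move each center to the mean of its members (keep it if the cluster is empty).
        centers = [
            tuple(sum(column) / len(column) for column in zip(*members))
            if members else centers[i]
            for i, members in enumerate(clusters)
        ]
    return centers, clusters


# Hypothetical unlabelled observations.
points = [(1.0, 1.0), (9.0, 9.0), (1.0, 2.0), (9.0, 8.0), (2.0, 1.0), (8.0, 9.0)]
centers, clusters = k_means(points, k=2)
for index, members in enumerate(clusters):
    print(index, sorted(members))
```

The clusters come back numbered, not named. Deciding what each grouping means, or whether it means anything, is left to a human after the fact, which is one small-scale version of the difficulty Layton points to.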

While the instance of chatbots going rogue with a new language might be overblown, Cutler and other AI experts do warn of the dangers of unsupervised learning in AI development, and caution that while anything remotely close to general AI is still far off in the future, there are profound ethical, moral and legal questions to begin considering today.[15] With this brief summary of AI put into perspective, we shall move to how the military currently understands and uses AI in warfare, and where it likely is morphing toward next.

How Human-Machine Teaming Is Currently Framed:

AI systems can operate autonomously or semi-autonomously, or they can remain in the traditional ‘at-rest’ or passive mode of activity, just as your smart phone or smart device awaits your command. While it may seem unnerving that your Alexa device is perpetually listening to background conversations, it is programmed to scan for specific words that trigger clearly defined and quite narrow actions. While ‘at-rest’ and proactive (semi-autonomous, autonomous) modes feature different relationship dynamics between the AI and humans, the following three are well recognized in current military applications of AI systems.[16]

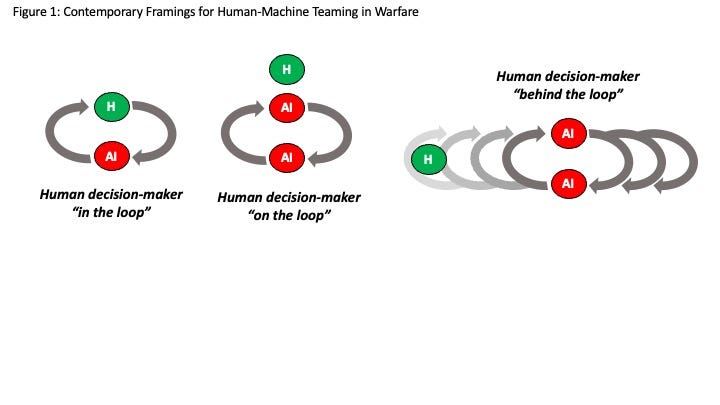

The original mode used since the earliest proto-computer enablers (as well as most any analog augmentation in warfare) positions the human as the key decision-maker in the cycle of thought-action-reflection. This is best known as ‘human-in-the-loop’ and expresses a dynamic where the human is central to the decision-making activities. The AI can provide exceptional contributions that exceed in narrow ways the human operator’s capabilities, but that AI does not actively ‘do’ anything significant without a design where the human intervenes and provides guidance or approval to act.

While the ‘human-in-the-loop’ remains the most common and, for ethical, moral and legal reasons the most popular mode of human-machine teaming for warfare, a second mode has also emerged with recent advances in AI technology.[17] Termed ‘human-on-the-loop’, the AI takes a larger role in decision-making and consequential action where the human operator is either monitoring activities, or the AI system is programmed to pause autonomous operations when particular criteria present the need for human interruption. In these situations, the AI likely has far faster abilities to sense, scan, analyze or otherwise interpret data beyond human abilities, but there still are ‘failsafe’ parameters for the human operators to ensure overarching control. An autonomous defense system might immediately target incoming rocket signatures with lethal force, but a human operator may need to make a targeting decision if something large like an aircraft is detected breaching defended airspace.

A third mode is only now coming into focus: with greater AI technological abilities as well as the increasing speed, scale and scope of new weaponry (hypersonic weapons, swarms and multi-domain, networked human-machine teams), a fully autonomous AI system is required. Termed ‘human-out-of-the-loop’, this differs from ‘dumb’ technology such as airbags that automatically activate when certain criteria are reached. True autonomous AI systems replace the human operator entirely, and are designed to function beyond the cognitive abilities of even the smartest human at what are currently narrow parameters. While many use ‘out-of-the-loop’ or ‘off-the-loop’, this article substitutes ‘behind-the-loop’ to introduce several increasingly problematic human-machine issues on future battlefields. Figure 1 illustrates these three modes.

Author’s original illustration 2022
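The three modes can also be read as a simple rule for when a system must pause and wait for a person. The sketch below is one hedged way to write that rule down; the names `Mode` and `needs_human_decision` are hypothetical, and the single `within_normal_parameters` flag is a deliberate simplification standing in for the text's contrast between a routine rocket signature and an unexpected large aircraft.

```python
from enum import Enum


class Mode(Enum):
    IN_THE_LOOP = "human-in-the-loop"
    ON_THE_LOOP = "human-on-the-loop"
    OUT_OF_THE_LOOP = "human-out-of-the-loop"


def needs_human_decision(mode, within_normal_parameters):
    """Return True when the system must wait for a human before acting."""
    if mode is Mode.IN_THE_LOOP:
        return True  # the human approves every consequential action
    if mode is Mode.ON_THE_LOOP:
        return not within_normal_parameters  # the human steps in only for exceptions
    return False  # the system acts without waiting for a human


for mode in Mode:
    for normal in (True, False):
        print(mode.value, normal, needs_human_decision(mode, normal))
```

Written this way, the difference between the second and third modes comes down to a single branch: whether anything at all can still hand the decision back to a person.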

An autonomous AI system functioning in narrow or even general AI applications will, as technological and security contexts demand, likely move from supervised to unsupervised machine learning profiles that access ever-growing mountains of data on the battlefield. Consider the daily volume of new ‘tweets’: Twitter averages 330 million monthly users, 206 million of whom tweet daily,[18] producing a daily average of over 500 million tweets worldwide in hundreds of different languages and countries.[19] ‘Human-in-the-loop’ monitoring at that volume would be impossible, while ‘human-on-the-loop’ is also expensive, slow and often subjective. Twitter, like many social media platforms, has many autonomous AI systems filtering, analyzing, and often taking down spam, fake accounts, bots and other harmful content without human intervention. This is not without risks and concerns, yet Twitter quality control is inevitably chasing behind the autonomous work of far faster AI systems that can scale to enormous levels beyond what an army of human reviewers could possibly match. However, fighting spam bots and fake accounts on Twitter is not exactly the same as autonomous drones able to decide on lethal weapon strikes independent of human operators.
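A rough back-of-the-envelope calculation shows why review by humans alone does not scale to that volume. Only the 500 million tweets per day comes from the figures cited above; the ten seconds per review and the eight-hour shift are assumptions picked purely for illustration.

```python
import math

TWEETS_PER_DAY = 500_000_000   # daily average cited in the text
SECONDS_PER_REVIEW = 10        # assumption: time for one person to read one tweet
SHIFT_SECONDS = 8 * 60 * 60    # assumption: one eight-hour shift per reviewer per day

tweets_per_second = TWEETS_PER_DAY / (24 * 60 * 60)
reviewers_needed = math.ceil(TWEETS_PER_DAY * SECONDS_PER_REVIEW / SHIFT_SECONDS)

print(f"{tweets_per_second:,.0f} tweets arrive per second")
print(f"{reviewers_needed:,} full-time reviewers needed to read each one once")
```

Under these assumptions the workload is roughly 5,800 tweets every second and more than 170,000 reviewers working full shifts, before accounting for languages, breaks or disagreement between reviewers.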

Human-machine teaming currently exists in all three of the representations in Figure 1, with the preponderance in the first depiction where human operators remain the primary decision-makers coupled with AI augmentation. Drone operators, satellite constellations, advanced weapon systems that auto-aim for human operators to decide when to fire, as well as bomb-defusing robots worked by remote control are common examples in mass utilization. Humans ‘on the loop’ abound as well, with missile defense systems, anti-aircraft and other indirect fire countermeasures able to function with human supervision or just with human engagement for unique conditions outside normal AI parameters. Given the increased sophistication and abilities of narrow AI systems, the frightening speeds achieved by hypersonic and advanced weaponry and the rise of devastating swarm movements that collectively would overwhelm any single human operator can now be addressed with autonomous weapon systems. These are not without significant ethical, legal and technological debate in security affairs.

How we express the human-machine teaming dynamic requires a combination of our conceptual models along with precise terminology that is underpinned by metaphoric devices. Below in Figure 1a, many of the terms currently in use as well as some new ones introduced in this article place the human ‘on the loop’, ‘off the loop’, ‘near the loop’, ‘behind the loop’ or potentially ‘under the loop’ depending on the context. Aside from the ‘in the loop’ configuration which has been the foundational structure for human decision-makers to direct command and control with an AI supporting system, all of these other placements for a human operator reflect changing technological potentials as well as the increasingly uncertain future of warfare. Such variation and uncertainty implies significant ethical, moral, legal and potentially paradigm-changing, existential shifts in civilization. The below graphic attempts to systemically frame some of these tensions, differences, and implications with how militaries articulate the human-machine team construct. The autonomous weapon acts as a means to a human designed end in some cases, whereas in other contexts the means becomes a new, emergent and independently designed ‘ends’ in itself, beyond human contribution. Note that in each depiction of a human, that operator is assumed to be an unmodified, natural version augmented by the AI system.

Author’s original graphic 2022

Yet the contemporary debates on how humans should employ autonomous weapon systems are just the latest evolution in human-machine teaming, where narrow AI is able to do precise activities faster and more effectively than the best human operator. Narrow AI applications in warfare illustrate the current frontier where existing technology is able to act with exceptional performance and destruction. Yet no narrow AI system can match human operators in general intelligence contexts, which still compose the bulk of warfare contexts. For the next decade or two, human operators will continue to dominate decision-making on battlefields yet to come, although increasingly the speed and dense technological soup of future wars will push humans into the back seat while AI drives in more situations than previously. It is the decades beyond those that will radically alter the human-machine warfare dynamic, potentially beyond any recognition.

References:

[2] Andrew Marshall, “Some Thoughts on Military Revolutions- Second Version” (Office of the Secretary of Defense, August 23, 1993), 2–3.

[3] James Der Derian, “Virtuous War/Virtual Theory,” International Affairs (Royal Institute of International Affairs 1944-) 76, no. 4 (October 2000): 788. Der Derian introduces the term ‘MIME’ and ‘MIME-NET’ for the combination of media entertainment and the military industrial complex that he sees as fused together today.

[4] Predator-prey relationships already existed in nature before humans, as did individual acts of violence for a wide range of reasons and goals. Yet only humans, once able to communicate and organize into recognizable groups, tribes and societies would become capable of waging war.

[5] Michal Krelina, “Quantum Warfare: Definitions, Overview and Challenges,” Quantum Physics (New York: Cornell University, March 21, 2021), https://arxiv.org/abs/2103.12548.

[6] Lorraine Daston, Rules: A Short History of What We Live By (Princeton, New Jersey: Princeton University Press, 2022), 122–50.

[7] Peter Layton, “Fighting Artificial Intelligence Battles: Operational Concepts for Future AI-Enabled Wars” (Australian Defence College Centre for Defence Research, 2021), 3, https://tasdcrc.com.au/wp-content/uploads/2021/02/JSPS_4.pdf.

[8] Ben Goertzel, “The Singularity Is Coming,” Issues 98 (March 2012): 6; Jacob Shatzer, “Fake and Future ‘Humans’: Artificial Intelligence, Transhumanism, and the Question of the Person,” Southwestern Journal of Theology 63, no. 2 (Spring 2021): 130.

[9] Nick Bostrom, Superintelligence: Paths, Dangers, Strategies, paperback (United Kingdom: Oxford University Press, 2016); Shatzer, “Fake and Future ‘Humans’: Artificial Intelligence, Transhumanism, and the Question of the Person,” 131.

[10] Goertzel, “The Singularity Is Coming,” 4.

[11] Layton, “Fighting Artificial Intelligence Battles: Operational Concepts for Future AI-Enabled Wars,” 9.

[12] Shatzer, “Fake and Future ‘Humans’: Artificial Intelligence, Transhumanism, and the Question of the Person,” 133; Paul Scharre, Autonomous Weapons and the Future of War: Army of None, Norton Paperback (New York: W.W. Norton & Company, Inc., 2018), 83.

[13] Layton, “Fighting Artificial Intelligence Battles: Operational Concepts for Future AI-Enabled Wars,” 9.

[14] The author invited Cutler to lecture on AI and defense at the Spring 2022 U.S. Space Command’s Commander’s Conference at Peterson Space Force Base, Colorado. During Cutler’s lecture, he provided this example when asked in the “Q&A” portion on what keeps him up at night.

[15] Giampiero Giacomello, “The War of ‘Intelligent’ Machines May Be Inevitable,” Peace Review: A Journal of Social Justice 33 (2021): 282–83; Brian Molloy, “Project Governance for Defense Applications of Artificial Intelligence: An Ethics-Based Approach,” PRISM 9, no. 3 (2021): 108.

[16] Scharre, Autonomous Weapons and the Future of War: Army of None, 42–52.

[17] Aron Dombrovszki, “The Unfounded Bias Against Autonomous Weapons Systems,” Informacios Tarsadalom 21, no. 2 (2021): 15.

[18] Neal Schaffer, “The Top 21 Twitter User Statistics for 2022 to Guide Your Marketing Strategy,” May 22, 2022, https://nealschaffer.com/twitter-user-statistics/.

[19] Salman Aslam, “Twitter by the Numbers: Stats, Demographics & Fun Facts,” Statistics Blog, February 22, 2022, https://www.omnicoreagency.com/twitter-statistics/.